Nebolisa Ugochukwu Benedict

Nebolisa Ugochukwu Benedict is a Software Engineer specializing in React, TypeScript, and Node.js. He architects high-performance systems that transform core concepts into production-ready products. He eliminates architectural friction by handling the development lifecycle end-to-end, ensuring that every layer of the stack is built for type safety and structural integrity.

Article by Gigson Expert

The intersection of software process and system performance represents the most critical juncture in modern engineering. While many organizations treat the Software Development Life Cycle (SDLC) as a mere project management framework, it functions as the actual blueprint for architectural growth. Scaling an architecture is not an isolated event but a continuous result of how requirements are gathered, how code is validated, and how infrastructure is deployed. When the chosen SDLC model fails to align with the technical demands of horizontal scaling, sharding, or microservices, the resulting architectural technical debt can become an insurmountable barrier to business growth. This article provides a clear analysis of how various SDLC frameworks influence the ability of a system to expand and the strategic considerations required to align these processes with high-performance architectural goals.

The Structural Foundation of Architectural Scaling

Before selecting a development methodology, a clear grasp of the fundamental mechanics of scaling is required. Scaling is the ability of a system to handle an increasing workload by integrating additional resources. This process is categorized primarily into vertical and horizontal expansion, each carrying distinct implications for the software life cycle.

Vertical scaling, often referred to as scaling up, involves increasing the capacity of a single machine by adding CPU, RAM, or storage. While straightforward, this approach is limited by physical hardware ceilings and introduces a single point of failure. Conversely, horizontal scaling, or scaling out, involves adding more nodes to a system. This is the preferred method for cloud-native applications, but it introduces significant complexity in data consistency and network communication.

The decision to scale horizontally often necessitates a shift toward Microservices Architecture (MSA). MSA decomposes a monolithic application into small, independent services, each capable of being developed, deployed, and scaled independently. This transition is not just a technical change but a procedural one, requiring an SDLC that supports rapid, independent release cycles. According to research from the Forbes Tech Council, teams must thoroughly understand how these application architecture choices impact technical debt and business growth.

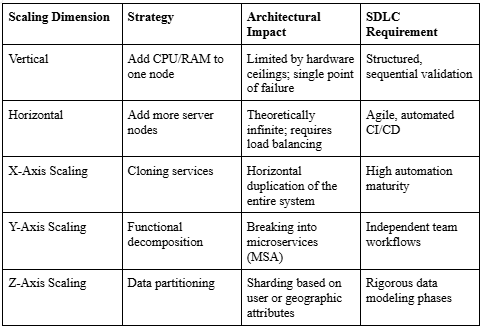

The "Scale Cube" provides a strategic framework for this growth. X-axis scaling focuses on horizontal cloning, Y-axis scaling focuses on the functional decomposition of services, and Z-axis scaling focuses on partitioning data or requests based on an identifiable variable, such as a user ID or location. Each of these axes requires a specific type of support from the development life cycle to ensure that the architecture does not become brittle as it expands.
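The three axes can be read as three independent routing decisions made for every request. The sketch below is only an illustration of that idea, not a production router: the service names, replica counts, region map, and the `route` function are all hypothetical.

```python
from itertools import count

# Hypothetical topology; every name and number here is illustrative.
SERVICE_REPLICAS = {"billing": 2, "search": 3}     # Y-axis: one entry per functional service
REGION_PARTITION = {"eu": 0, "us": 1, "apac": 2}   # Z-axis: data partitioned by location

_round_robin = count()  # shared counter that drives X-axis replica selection


def route(service: str, region: str) -> tuple[str, int, int]:
    """Return (service, partition, replica) for a single request."""
    replicas = SERVICE_REPLICAS[service]       # Y-axis: choose the functional service
    partition = REGION_PARTITION[region]       # Z-axis: choose the data slice
    replica = next(_round_robin) % replicas    # X-axis: choose one of the identical clones
    return service, partition, replica


if __name__ == "__main__":
    print(route("billing", "eu"))
    print(route("billing", "eu"))  # same service and partition, different clone
```

Keeping the three decisions separate is what lets each axis grow on its own: adding a replica, a service, or a partition changes one table without touching the other two.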

Strategic SDLC Model Selection

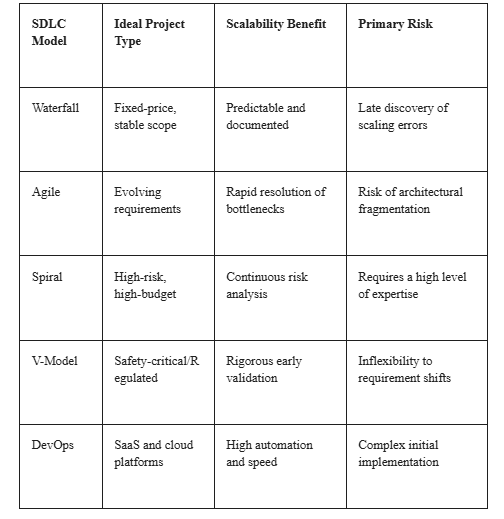

The choice of a development model determines how quickly a team can respond to performance bottlenecks. This section compares sequential and adaptive frameworks in the context of growth.

The SDLC provides the phases that guide a project from initial planning to long-term maintenance. For a system designed for high growth, these phases must focus on scalability from the very first day. The planning phase sets the objective and defines the project scope. During this stage, feasibility checks are conducted to determine if the proposed architecture can support the expected load. If a model is too rigid, like the traditional Waterfall model, the ability to adjust architectural designs based on early testing results is severely limited.

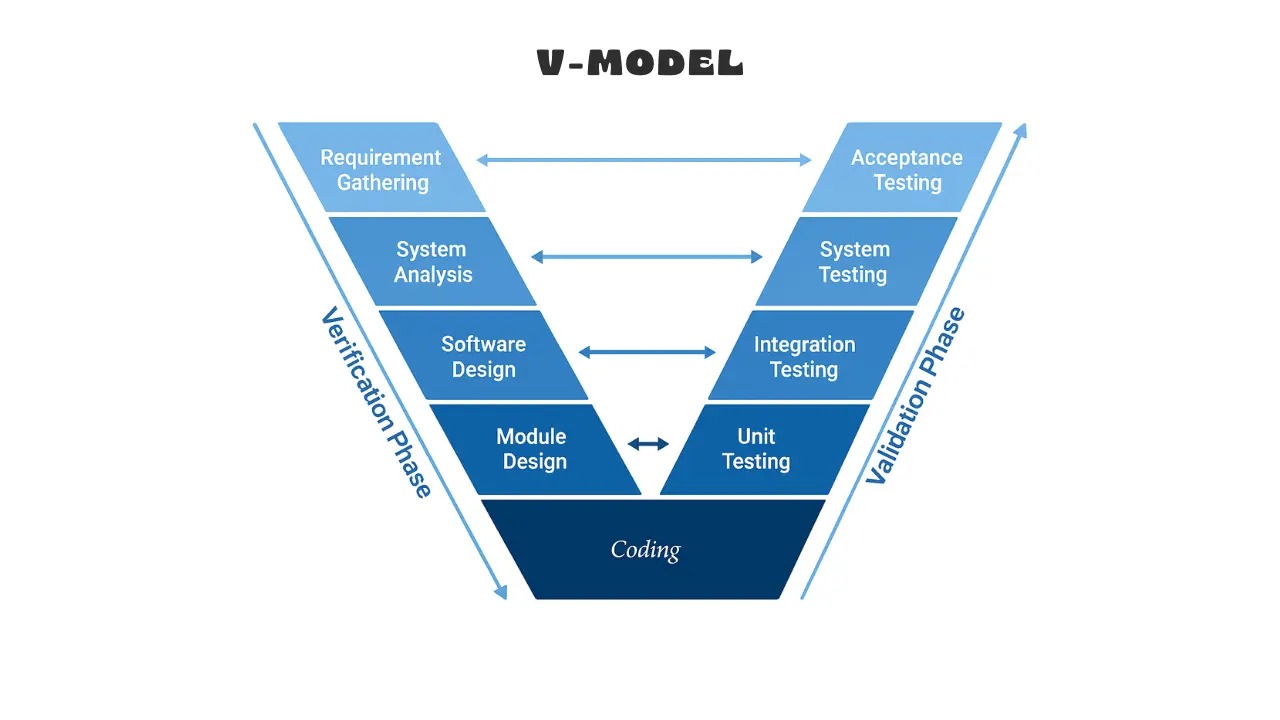

Sequential models like the Waterfall or the V-Model are best for projects with stable requirements or mission-critical needs, such as healthcare or defense systems. In the V-Model, each development stage is paired with a corresponding testing phase to ensure quality is considered from the beginning. For example, requirements analysis is paired with acceptance testing, system design with system testing, and module design with unit testing.

This structure shows why the V-Model is more rigid than Agile: the next phase only starts after the completion and validation of the previous one. While this catches errors early, it can lead to bottlenecks if requirements change mid-stream.

Modern scaling usually benefits from iterative or adaptive frameworks. The iterative model delivers functionality in partial releases, which allows for early feedback. Agile methodologies like Scrum focus on continuous delivery and collaboration, and they let teams address performance issues in short sprints.

To maintain architectural precision in these fast-moving environments, many teams are adopting Spec-Driven Development (SDD). SDD puts a clear written specification at the center of the work to reduce guesswork for both human developers and AI agents. In this context, a "spec" is not just a text document but a formal contract, such as an OpenAPI specification for REST APIs or a Smithy model for service definitions. These specs serve as the single source of truth that AI agents use to generate, test, and validate code.
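To make the idea of a spec as a contract concrete, here is a minimal sketch of checking a response payload against a schema fragment. It is a hand-rolled subset written for illustration, not real OpenAPI tooling; `USER_SCHEMA` and `violations` are hypothetical names, and a real project would normally rely on a dedicated validator.

```python
# Hypothetical schema fragment, loosely shaped like the schema section of an API spec.
USER_SCHEMA = {
    "type": "object",
    "required": ["id", "email"],
    "properties": {
        "id": {"type": "integer"},
        "email": {"type": "string"},
        "nickname": {"type": "string"},
    },
}

# Only the handful of primitive types this sketch understands.
_TYPE_MAP = {"integer": int, "string": str, "boolean": bool, "number": (int, float)}


def violations(payload: dict, schema: dict) -> list[str]:
    """Return readable contract violations; an empty list means the payload conforms."""
    problems = []
    for field in schema.get("required", []):
        if field not in payload:
            problems.append(f"missing required field: {field}")
    for field, rules in schema.get("properties", {}).items():
        if field not in payload:
            continue
        value = payload[field]
        expected = _TYPE_MAP[rules["type"]]
        # bool is a subclass of int in Python, so reject it for non-boolean fields.
        is_stray_bool = isinstance(value, bool) and rules["type"] != "boolean"
        if is_stray_bool or not isinstance(value, expected):
            problems.append(f"wrong type for {field}: expected {rules['type']}")
    return problems


if __name__ == "__main__":
    print(violations({"id": "1"}, USER_SCHEMA))
```

Running a check like this in the test stage means generated or hand-written code is judged against the same document, whoever or whatever produced it.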

Microservices and the Path to Horizontal Scaling

Distributed systems offer the most flexibility for growth, but they require a specific type of operational support. This section details how microservices impact system maintenance and speed.

The adoption of Microservices Architecture (MSA) strongly influences both maintainability and scalability. MSA allows each service to be scaled based on its specific demand. This is a stark contrast to monolithic architectures, where the entire system must be scaled even if only one component is under load. Major platforms like Amazon and Netflix transitioned to microservices to improve scalability, allowing them to independently scale specific services during high-traffic periods like holidays.
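The difference can be shown with simple capacity arithmetic. The following is a deliberately simplified model with made-up numbers: the service names, peak loads, and per-instance capacities are assumptions, and it ignores shared resources and overhead.

```python
import math

# Hypothetical figures: peak requests per second for each component, and how
# many requests per second a single instance of that component can serve.
PEAK_LOAD = {"checkout": 900, "catalog": 300, "reviews": 50}
CAPACITY_PER_INSTANCE = {"checkout": 100, "catalog": 150, "reviews": 200}


def microservice_instances(load: dict, capacity: dict) -> dict:
    """Each service is sized from its own demand."""
    return {name: math.ceil(load[name] / capacity[name]) for name in load}


def monolith_instances(load: dict, capacity: dict) -> int:
    """A monolith ships every component together, so the busiest one sets the count."""
    return max(math.ceil(load[name] / capacity[name]) for name in load)


if __name__ == "__main__":
    print(microservice_instances(PEAK_LOAD, CAPACITY_PER_INSTANCE))
    print(monolith_instances(PEAK_LOAD, CAPACITY_PER_INSTANCE))
```

Under these assumed numbers the monolith runs nine full copies because of checkout alone, while the decomposed system runs nine checkout instances, two catalog instances, and one reviews instance.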

However, managing these distributed systems requires a significant cultural and procedural shift. Engineering teams must adopt a DevOps mindset where the boundary between development and operations is blurred. A primary challenge in MSA is managing inter-service communication. As the number of services grows, network latency and the overhead of communication protocols can become a bottleneck. The SDLC must therefore include rigorous performance and load testing of service interfaces. Modern engineering leaders are also using architectural observability to visualize and measure how application architectures evolve. According to Forbes, this helps teams prioritize and remediate technical debt as it occurs.
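One way to build interface load testing into the life cycle is to gate a release on tail latency. The sketch below assumes a p95 budget as the release criterion; the function names and the budget itself are hypothetical, and `call` stands in for whatever client invokes the service under test.

```python
import math
import time


def measure(call, requests: int) -> list[float]:
    """Invoke `call` repeatedly and record each latency in milliseconds."""
    samples = []
    for _ in range(requests):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples recorded")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(samples: list[float], p95_budget_ms: float) -> bool:
    """Pass only if the 95th percentile latency stays inside the budget."""
    return percentile(samples, 95) <= p95_budget_ms


if __name__ == "__main__":
    latencies = measure(lambda: sum(range(1000)), requests=200)
    print(within_budget(latencies, p95_budget_ms=5.0))
```

A percentile is used instead of the mean because a small fraction of slow calls between services can dominate end-to-end latency while barely moving the average.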

Data Sharding and Partitioning Management

Scaling a database is often the most significant challenge in architectural growth. This section explores strategies for dividing data to prevent performance degradation.

While application servers can easily be scaled out, databases traditionally face issues with performance and concurrency as the volume of data grows. Sharding is the primary strategy for addressing this. It involves the division of a data store into a set of horizontal partitions or shards. Sharding can be implemented at the microservice layer or the data layer, and each method has consequences for the overall architecture.

A major risk in this process is the Hot Shard problem. This occurs when data is distributed unevenly, causing one shard to receive a disproportionate amount of load (a hotspot) while others remain underloaded. For example, if you shard a fitness app by "Age" and 95 percent of your users are between 30 and 45, that specific shard will experience memory saturation and request backlogs while other shards sit idle.
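The fitness app example can be reproduced with a small synthetic population. This is a sketch under stated assumptions: the three age ranges and the sample of 100 users are invented to match the 95 percent figure above.

```python
from collections import Counter

# Hypothetical age-range shards for the fitness app example.
AGE_SHARDS = [(0, 29), (30, 45), (46, 120)]


def shard_for_age(age: int) -> int:
    """Range-based sharding on a skewed, low-cardinality key."""
    for index, (low, high) in enumerate(AGE_SHARDS):
        if low <= age <= high:
            return index
    raise ValueError(f"age out of range: {age}")


def load_share(ages: list[int]) -> dict[int, float]:
    """Fraction of users that land on each shard."""
    counts = Counter(shard_for_age(age) for age in ages)
    return {index: counts.get(index, 0) / len(ages) for index in range(len(AGE_SHARDS))}


# Synthetic population: 95 users aged 30 to 45, plus 5 users outside that range.
AGES = [30 + (i % 16) for i in range(95)] + [22, 25, 50, 61, 70]

if __name__ == "__main__":
    print(load_share(AGES))
```

With this population the middle shard carries 95 percent of the users, so adding more shards at either end of the age range would not relieve it.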

To avoid this, Microsoft Azure architecture guidelines recommend using stable, unique, and invariant shard keys. Effective shard keys must have high cardinality (many possible values) and well-distributed frequency to ensure an even load across the system.
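A common way to act on this guidance is to hash a stable, high-cardinality key such as a user ID. The sketch below is one possible approach, not the method prescribed by any particular guideline; `shard_for_key` and `skew` are hypothetical helper names.

```python
import hashlib
from collections import Counter


def shard_for_key(shard_key: str, shard_count: int) -> int:
    """Map a stable, unique key to a shard with a deterministic hash.

    Python's built-in hash() is salted per process for strings, so a digest
    is used here to keep the mapping identical across restarts.
    """
    digest = hashlib.sha256(shard_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


def skew(keys: list[str], shard_count: int) -> float:
    """Busiest shard's load divided by the ideal even load (1.0 is perfectly even)."""
    counts = Counter(shard_for_key(key, shard_count) for key in keys)
    ideal = len(keys) / shard_count
    return max(counts.values()) / ideal


if __name__ == "__main__":
    user_ids = [f"user-{i}" for i in range(10_000)]
    print(skew(user_ids, 4))                         # high cardinality: close to 1.0
    print(skew(["30-45"] * 95 + ["other"] * 5, 4))   # low cardinality: heavily skewed
```

One design caveat: with plain modulo hashing, changing the shard count remaps most keys, so a scheme like this suits a fixed shard count or needs a remapping strategy on top.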

The Economic Reality of Technical Debt

The success of a scaling strategy is as much about the organization as it is about the technology. This section examines the costs associated with integration and resource management.

Choosing the right SDLC model requires an understanding of team size, budget, and risk tolerance. Larger teams with diverse skill sets often benefit from models that allow for parallel workstreams, such as Agile or DevOps. However, organizations must be wary of the hidden costs associated with their choice of tools. Many free AI tools can lead to integration hell, a systemic condition in which the cost of connecting components exceeds the cost of the components themselves. Research indicates that integration and deployment can consume 50 to 60 percent of total project costs.

A major bottleneck in the scaling process is the reliance on shared staging environments. In a microservices environment, debugging test failures in staging is often complicated by the co-mingling of commits from multiple developers. If a developer commits a breaking change to a shared cluster, it can block the work of the entire team. Successful organizations are moving away from traditional automation and toward Agentic Process Automation (APA). This evolution allows for more adaptive decision-making and helps processes keep moving even when inputs vary.

Summary of Strategic Decisions

Selecting the right SDLC model is among the most important decisions an engineering leader can make when preparing for growth. While sequential models provide the structure needed for regulated systems, they lack the agility required for cloud-native environments. Agile and DevOps provide speed but require high levels of automation and shared responsibility. By aligning development processes with technical goals, organizations can build systems that are not only capable of handling today's loads but are also resilient enough to meet future challenges.

Frequently Asked Questions

Which SDLC model is best for a startup looking to scale quickly?

For fast-moving startups and scaleups, the Agile or DevOps models are usually the best choice. These models prioritize rapid iterations and continuous delivery, allowing the product to grow adaptively in response to user feedback. DevOps is essential for maintaining the high uptime and fast innovation required for modern SaaS products.

How do sharding decisions impact the choice of SDLC?

Sharding decisions are complex and high-risk, meaning they benefit from models that emphasize early prototyping and risk analysis, such as the Spiral or Iterative models. If sharding is implemented at the data layer, it requires close coordination between development and database teams, which is best supported by a DevOps or Agile framework.

When should an organization choose the V-Model over Agile?

The V-Model is preferred when there are rigorous testing, safety, or regulatory requirements. If the cost of failure is high, such as in medical devices or aerospace, the structured verification and validation of the V-Model provides a level of assurance that is difficult to achieve in a purely Agile environment.

What is the main difference between RPA and APA in software scaling?

Traditional Robotic Process Automation (RPA) follows rigid, rule-based scripts that often break when inputs change. Agentic Process Automation (APA) uses AI agents that understand goals and adapt to changing conditions in real-time, making it far more effective for scaling complex, dynamic business workflows.